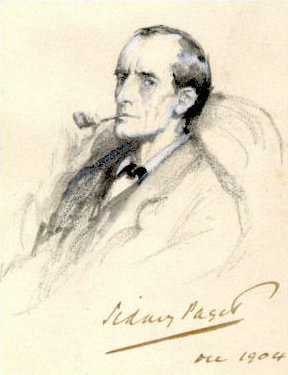

Whenever I am feeling stressed out in the evening, I re-read something old, familiar, and soothing. For me, that is often the Sherlock Holmes stories. I read a story here, a story there, and pretty soon I’ve worked my way through the entire collection. Again.

On each iteration, though, I discover something new. Recently, I re-read Doyle’s 1904 story, “The Adventure of Charles Augustus Milverton.” It’s one of the lesser known of the Sherlock tales, which is ironic because it’s also one of the best. Part of the reason for its obscurity, I suspect, is its boring title (more of a “label” than an actual title). I mean, if you had a choice between reading a story called “The Adventure of Charles Augustus Milverton” or “The Hound of the Baskervilles,” which would you pick?

Anyway, I really admire this story, for a number of reasons. Its titular character, Milverton, is a master blackmailer who funds his lavish lifestyle by obtaining compromising letters (what we would now call kompromat) written by rich, aristocratic ladies. He buys the letters from treacherous servants in the ladies’ households, then uses them to extort ruinous sums from the lady in question, often pouncing right before her wedding to some duke or count or whatever. Holmes explains all this to his friend Watson in 221-B Baker Street as they wait for the villain to stop by. (Milverton’s latest target, an unnamed rich lady, has hired Holmes to get her letters back.)

It’s a very similar premise to another of Doyle’s great stories, “A Scandal in Bohemia,” which I wrote a post about some years ago entitled “Sherlock Holmes was a Fixer”. As in that prior story, Holmes serves as more of a trouble-shooter than a traditional detective here, using his intellect and knowledge of the underworld to sort out a client’s problems. More importantly, in both stories Holmes finds himself compelled to break the law in order to get his client out of trouble. In “The Adventure of Charles Augustus Milverton,” he disguises himself as a working-class tradesman in order to infiltrate Milverton’s household. As Holmes explains to Watson after the fact:

“You would not call me a marrying man, Watson?”

“No, indeed!”

“You’ll be interested to hear that I’m engaged.”

“My dear fellow! I congrat——”

“To Milverton’s housemaid.”

“Good heavens, Holmes!”

“I wanted information, Watson.”

“Surely you have gone too far?”

“It was a most necessary step. I am a plumber with a rising business, Escott, by name. I have walked out with her each evening, and I have talked with her. Good heavens, those talks! However, I have got all I wanted. I know Milverton’s house as I know the palm of my hand.”

“But the girl, Holmes?”

He shrugged his shoulders. “You can’t help it, my dear Watson. You must play your cards as best you can when such a stake is on the table. However, I rejoice to say that I have a hated rival, who will certainly cut me out the instant that my back is turned. What a splendid night it is!”

I love that line, “You can’t help it, my dear Watson.” It seems to encapsulate the great theme of the noir and hard-boiled detective fiction that would emerge later in America. Namely, how do you fight evil effectively without becoming evil yourself? The answer, of course, is that you can’t. Not completely. As the morally expedient Sam Spade says to his similarly disapproving secretary in The Maltese Falcon, “That’s just the way it is, dear.”

Speaking of The Maltese Falcon, I would bet that Dashiell Hammett read (and re-read) “The Adventure of Charles Augustus Milverton.” The villain of Hammett’s novel, Gutman (played with such risible gusto by Sydney Greenstreet in the 1941 film version), seems like a direct descendant of Doyle’s Milverton. As Doyle describes him,

Charles Augustus Milverton was a man of fifty, with a large, intellectual head, a round, plump, hairless face, a perpetual frozen smile, and two keen gray eyes, which gleamed brightly from behind broad, gold-rimmed glasses. There was something of Mr. Pickwick’s benevolence in his appearance, marred only by the insincerity of the fixed smile and by the hard glitter of those restless and penetrating eyes. His voice was as smooth and suave as his countenance, as he advanced with a plump little hand extended, murmuring his regret for having missed us at his first visit. Holmes disregarded the outstretched hand and looked at him with a face of granite. Milverton’s smile broadened, he shrugged his shoulders, removed his overcoat, folded it with great deliberation over the back of a chair, and then took a seat.

Now look at Hammett’s introduction of Gutman…

The fat man was flabbily fat with bulbous pink cheeks and lips and chins and neck, with a great soft egg of a belly that was all his torso, and pendant cones for arms and legs. As he advanced to meet Spade all his bulbs rose and shook and fell separately with each step, in the manner of clustered soap-bubbles not yet released from the pipe through which they had been blown. His eyes, made small by fat puffs around them, were dark and sleek. Dark ringlets thinly covered his broad scalp. He wore a black cutaway coat, black vest, black satin Ascot tie holding a pinkish pearl, striped grey worsted trousers, and patent-leather shoes.

Note how both villains are presented as soft, one way or another. That is, both are overweight (Milverton is just “plump” while Gutman is “flabbily fat”), their girth symbolizing not only their greed but their apparent harmlessness. Which makes them all the more dangerous, of course. People underestimate them. They want people to underestimate them. In the same way, both are impeccably mannered and well dressed, with a bit of the dandy about them. The only really threatening thing about them, on the surface, is their compelling eyes (Milverton’s are “hard,” “keen” and “penetrating”; Gutman’s are “dark and sleek”).

Yet both are formidable opponents, both physically and intellectually. Milverton, for his part, moves “quick as a rat” when Holmes and Watson try to use physical force against him, drawing a revolver to defend himself. He is so capable, in fact, that Holmes resorts to simple robbery in hope of retrieving the kompromat. After seducing Milverton’s hapless maid, he explains how he plans to break into the man’s library and crack his safe.

As in all noir fiction, Holmes’s corruption is contagious. It spreads. Watson insists on coming along on the caper, and soon finds himself enjoying it. As he relates,

My first feeling of fear had passed away, and I thrilled now with a keener zest than I had ever enjoyed when we were the defenders of the law instead of its defiers. The high object of our mission, the consciousness that it was unselfish and chivalrous, the villainous character of our opponent, all added to the sporting interest of the adventure.

Naturally, the operation goes pear-shaped. After Holmes and Watson break into the library, Milverton returns to the room and they are forced to hide behind a tapestry. They listen, helpless, as the man conducts a clandestine, midnight meeting with an unknown woman, one whom he assumes to be another disgruntled maid but who is actually one of his former victims. The lady shoots him dead, then flees. Holmes, having already cracked Milverton’s safe, tosses all the letters into the fire, after which he and Watson do a runner, barely escaping the dead man’s enraged house staff.

With the main plot essentially over, the story still has a couple of surprises in the last pages. Indeed, there is a bit of transgressive humor near the end, when Holmes and Watson are visited the next morning by Inspector Lestrade, of Scotland Yard, seeking their help in a puzzling new case that has arisen overnight: namely, the burgling and murder of one Charles Augustus Milverton! As Lestrade reports, there were two criminals involved in the matter.

“Criminals?” said Holmes. “Plural?”

“Yes, there were two of them. They were as nearly as possible captured red-handed. We have their footmarks, we have their description, it’s ten to one that we trace them. The first fellow was a bit too active, but the second was caught by the under-gardener, and only got away after a struggle. He was a middle-sized, strongly built man—square jaw, thick neck, moustache, a mask over his eyes.”

“That’s rather vague,” said Sherlock Holmes. “My, it might be a description of Watson!”

“It’s true,” said the inspector, with amusement. “It might be a description of Watson.”

This wonderful black comedy is, of course, another way the story presages noir fiction. Holmes, like Sam Spade or Philip Marlowe, takes gleeful delight in outwitting the cops, even though he has no real animus towards them. In some ways, Holmes is a prototype of those later detectives. Like them, he is an island unto himself, obeying the law when possible but otherwise following his own internal moral code, no matter where it might lead.

It’s a very modern, almost existentialist version of Holmes, one that is seldom seen in the pop-cultural depictions of him.

Not bad, for a story that’s over a century old.