It was a late-summer day in 1990. I was teaching two sections of English Composition 101 at the University of Arizona, and it was the first day of the semester. My second class was in the afternoon, at three o’clock. Being a very young Teaching Assistant, I had dressed in my best slacks, dress shirt, tie, and loafers in a rather comical effort to earn my students’ respect (or, at least, their forbearance). My outfit was also completely inappropriate to the 100-degree-plus temperatures outside, which I felt even more than usual because my classroom for that section was across campus, so I had to schlep it from the dark, air-conditioned office that I shared with two dozen other T.A.s in the basement of the Modern Languages building.

The first class went well. I liked the students, and they seemed to like me. When class was over, I gathered my things into my backpack and headed out. The moment I stepped outside, though, I knew I wasn’t going anywhere. A late-summer rainstorm—a monsoon, as they are called out there—had struck. I went back into the building and waited until the rain finally stopped. Unfortunately, Tucson, like every other desert city, is prone to flooding, and I knew the streets and even the sidewalks would be swamped for hours. Not wanting to wait that long, I took off my jacket and put it in my backpack. I did the same with my socks and shoes, then rolled up the cuffs on my slacks. Thus barefoot, I ventured out, sloshing my way across campus and then out into the little urban neighborhood where I had parked my car.

I didn’t mind, in part because I had something to read, a paperback copy of Roger Penrose’s new book The Emperor’s New Mind. Yes, it’s one of those rare books that, even in chapter one, become so engrossing that you will read them while walking through the streets after a monsoon, barely noticing the cold water that’s up to your ankles. I held it in front of me as I walked the familiar route, absorbed in the slow, methodical, yet miraculous argument that Penrose was weaving, which is simple yet dumbfounding: There is something non-mechanical (that is, non-algorithmic) about consciousness.

That is, our brains are not merely “machines made of meat,” to paraphrase the words of AI pioneer Marvin Minsky. Minsky was, of course, a founding proponent of the “Hard-AI” theory of computer science, which states that the brain is really just a very complicated computer, which, though made of biological parts, is nonetheless executing an algorithm. In the future—the theory goes—when digital computers become sophisticated enough to execute this mysterious algorithm, they will become “conscious,” too. Just like us. At this point in our technological evolution (the vaunted Singularity), machine consciousness and human consciousness will blur together, such that human beings might wish to “upload” their consciousness (i.e., all their thoughts, memories, desires, etc.) to a computer as digital data, and thus achieve immortality in cyberspace.

It’s an idea that would have seemed absurd—if not incomprehensible—a hundred years ago, but which has been gaining traction since the 1960s. Now, in the age of Generative AI—whose power really does seem miraculous, at times—the notion seems almost a given. A fait accompli.

Yet, if you’re like me, you’ve thought to yourself: This is all bullshit. The human mind is not a computer; and computers—as we now understand them—will never be conscious. To suggest otherwise is a category error.

However, you’ve probably kept this thought to yourself (deep inside your consciousness, as it were, heh heh) for fear of being ridiculed by the tech-bros and computer nerds in your office or classroom or wherever. Guys who not only completely subscribe to the Hard-AI theory but also read (and sometimes write) sci-fi novels about it. They not only believe in the Singularity; they look forward to it!

Not me. Whenever I hear one of these bros blathering on about Skynet becoming self-aware or uploading their consciousness to a computer, I just say, “Roger Penrose says it’s impossible.” At which point the bro in question will usually give me a befuddled look, as if to say, Who the fuck is Roger Penrose? Which is, of course, a kind of tragedy in and of itself. I usually answer: “Roger Penrose was Stephen Hawking’s partner in theoretical physics.” That shuts the bro up for a bit. After all, anyone smart enough to hang with Stephen Hawking is surely a force to be reckoned with. You can’t as easily dismiss a theoretical physicist of that stature, even when he is opining on a subject—computers—that might seem a bit outside his field.

In fact, thinking about thinking is right up Penrose’s alley, so to speak. He’s not just a theoretical physicist; he’s a mathematical theoretical physicist, which means that he’s a world-class mathematician whose creations are designed to help us understand cosmology, particle theory, etc. He shared the Wolf Prize in Physics with Stephen Hawking for their work on the Penrose–Hawking singularity theorems, and he is the discoverer of one of the best-known aperiodic tilings, the now-famous Penrose Tiling. (Many other aperiodic tiles have been discovered since, at least one of which I have posted about.) His other accomplishments are too numerous to mention.

So, Penrose brings a considerable amount of street cred when he finally makes his main assertion in the book—namely, that there is some as-yet unknown quality about consciousness that computers do not possess, and probably never will. He writes, “…there must be something essentially non-algorithmic about consciousness.” He further writes,

When I assert my own belief that true intelligence requires consciousness, I am implicitly suggesting (since I do not believe the strong-AI contention that the mere enaction of an algorithm would evoke consciousness) that intelligence cannot be properly simulated by algorithmic means, i.e. by a computer, in the sense that we use that term today.

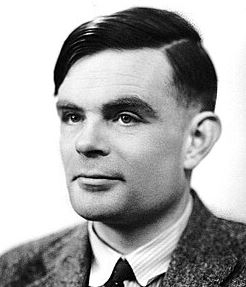

He doesn’t fully come out and say this until the last few chapters of this (very long) treatise. The bulk of the book is dedicated to the evidence he has for his belief, beginning with a discussion of Turing Machines. There are a lot of great videos about Turing Machines on YouTube, but suffice it to say that a Turing Machine is a very simple machine imagined by Alan Turing (yeah, Benedict Cumberbatch played him in the movie) in the 1930s. It consists solely of a read/write head through which a long—infinitely long, if need be—magnetic tape is run back and forth. A mechanism inside the head is capable of a few simple operations: it can move the tape forward or backward one place at a time; it can read a symbol off the tape in the current position; it can write a symbol to the current position; and it can keep track of which one of a small, fixed number of internal conditions it is currently in (called a “state” by Turing). What it does next depends only on its current state and the symbol it has just read.

If this sounds vaguely familiar, it should. A Turing Machine is basically an abstract, idealized version of a modern, programmable computer. If you replace the magnetic tape with a hard drive, you essentially have a modern computer—albeit one with only 1 byte of RAM (or thereabouts) and a very simple CPU. Even so, with a sufficient amount of tape, a Turing Machine could run any computer program in existence, even those used currently by AI networks. (It would, of course, do so very, very slowly.)
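For the programmers in the audience, here is a minimal sketch of such a machine in Python. To be clear, this is my own toy illustration, not anything taken from Penrose's book or Turing's paper: the function name `run_turing_machine`, the format of the rule table, and the little binary-increment machine are all made up for the example. The "tape" is just a dictionary that pretends to be infinitely long, and I have added a step limit as a safety net.

```python
from collections import defaultdict

BLANK = "_"  # symbol found on every cell of the tape that has not been written to


def run_turing_machine(rules, tape, state="start", max_steps=10_000):
    """Run a one-tape Turing machine until it enters the 'halt' state.

    rules maps (state, symbol_read) -> (symbol_to_write, move, next_state),
    where move is -1 (one cell left) or +1 (one cell right).
    Returns the non-blank portion of the tape as a string.
    """
    cells = defaultdict(lambda: BLANK, enumerate(tape))  # unbounded in both directions
    head = 0
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("gave up: machine did not halt within max_steps")
        write, move, state = rules[(state, cells[head])]
        cells[head] = write
        head += move
        steps += 1
    used = [i for i, symbol in cells.items() if symbol != BLANK]
    if not used:
        return ""
    return "".join(cells[i] for i in range(min(used), max(used) + 1))


# A hypothetical example machine: add 1 to a binary number.
# It scans right to the end of the number, then carries back to the left.
INCREMENT_RULES = {
    ("start", "0"): ("0", +1, "start"),
    ("start", "1"): ("1", +1, "start"),
    ("start", BLANK): (BLANK, -1, "carry"),
    ("carry", "1"): ("0", -1, "carry"),
    ("carry", "0"): ("1", -1, "halt"),
    ("carry", BLANK): ("1", -1, "halt"),
}

print(run_turing_machine(INCREMENT_RULES, "1011"))  # prints 1100 (11 + 1 = 12)
```

Notice that the whole "CPU" is nothing but a lookup table. Everything the machine will ever do is fixed by `rules`, which is exactly what it means for a process to be algorithmic.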

With this in mind, Turing set out to determine whether one could, eventually, make a Turing Machine that could solve any math problem. That is, could the science of mathematics ever become so advanced, so perfect, so complete, that one could use it to write an algorithm (which would be encoded on the tape) that could decide if any mathematical statement (also encoded on the tape) were true or false?

If so, then one would never need another, more advanced calculator. It would be a universal computational device.

Sadly (or, rather, happily, in my opinion), Turing was able to prove definitively that this was not possible. One can never—not in a million years—devise an algorithm so smart it can decide the truth or falsity of any math problem. The logic he used to prove this fact is encapsulated in a scenario now called the Halting problem (on which there is at least one excellent video on YouTube here).
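The trick at the heart of the Halting problem can be sketched in a few lines of Python. This is a simplified illustration of my own, not Turing's actual construction: I use ordinary zero-argument Python functions in place of machines on a tape, and the names `refute` and `contrary` are hypothetical. The idea is to take any function `halts` that claims to predict whether a program will ever finish, and use it to build a "contrary" program that does the opposite of whatever is predicted about it. The sketch assumes `halts` always returns an answer and gives the same answer each time it is asked.

```python
def refute(halts):
    """Show that a claimed halting-predictor is wrong about at least one program.

    `halts` is any function that takes a zero-argument function and returns
    True ("it will finish") or False ("it will run forever").
    Returns (prediction, actual_behavior) for the contrary program.
    """

    def contrary():
        # Do the opposite of whatever `halts` predicts about this very function.
        if halts(contrary):
            while True:  # predicted to finish, so run forever instead
                pass
        return "halted"  # predicted to run forever, so finish immediately

    prediction = halts(contrary)
    if prediction:
        # By construction, contrary() would now loop forever.
        # We reason about that rather than actually running it.
        actually_halts = False
    else:
        contrary()  # safe to run: it returns immediately
        actually_halts = True
    return prediction, actually_halts


# Two deliberately naive predictors, just to exercise the sketch.
print(refute(lambda program: True))   # prints (True, False)
print(refute(lambda program: False))  # prints (False, True)
```

Whichever answer the predictor gives, the contrary program makes it wrong. The two predictors here are silly on purpose, but the same trap catches any `halts` you could write, no matter how clever, and that is the gist of why no universal truth-deciding algorithm can exist.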

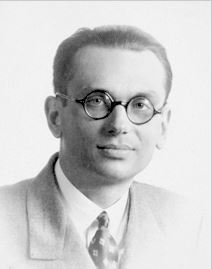

Turing’s proof was the final nail in the coffin of David Hilbert’s Entscheidungsproblem, which posed the question of whether it was possible to make a perfect, complete mathematical algorithm. Two-thirds of the question had already been solved (in the negative) by Kurt Gödel’s Incompleteness theorems, which rocked the worlds of math and logic in 1931. Penrose also delves into these theorems at great length, demonstrating how Gödel proved that no consistent set of axioms (at least, none rich enough to do arithmetic) can prove the truth or falsity of every mathematical statement. In other words, no single set of statements (no algorithm), no matter how large or sophisticated, will ever be able to solve every math problem.

Penrose’s main point, however, goes beyond even this revelation. He asserts that Gödel’s theorems, by proving that no single algorithm can solve every problem, could not, themselves, be the product of any algorithmic system! In other words, Gödel was not, himself, a computer running some incredibly elaborate, highly-evolved “consciousness algorithm.” Rather, Mr. Gödel, like all conscious beings, was…something else. Penrose writes,

Let us recall the arguments given in Chapter 4 establishing Gödel’s theorem and its relation to computability. It was shown there that whatever (sufficiently extensive) algorithm a mathematician might use to establish mathematical truth – or, what amounts to the same thing, whatever formal system he might adopt as providing his criterion of truth – there will always be mathematical propositions, such as the explicit Gödel proposition Pk(k) of the system…, that his algorithm cannot provide an answer for. If the workings of the mathematician’s mind are entirely algorithmic, then the algorithm (or formal system) that he actually uses to form his judgements is not capable of dealing with the proposition Pk(k) constructed from his personal algorithm. Nevertheless, we can (in principle) see that Pk(k) is actually true! This would seem to provide him with a contradiction, since he ought to be able to see that also. Perhaps this indicates that the mathematician was not using an algorithm at all!

But if Gödel’s brain isn’t just a computer, what is it? Where does the magic of consciousness come from?

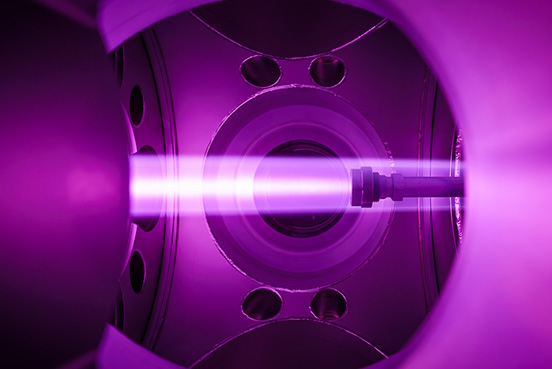

Well, nobody knows—not even Penrose himself, as he readily admits. Unsurprisingly, he does not reach for some mystical, supernatural answer (as I, ultimately, do). Rather, he argues—very convincingly—that consciousness might have some relationship to quantum mechanics. That is, there might well be some quantum mechanical aspect to minds, both human and animal.

This is not to say, of course, that quantum mechanics (QM) necessarily causes consciousness. Rather, Penrose merely suggests that there is some deep relationship between QM and consciousness. It’s a pretty cool idea, which he spends the last fifth of the book elaborating.

When the book came out, critics immediately howled that Penrose is not a neurologist, nor an expert on the human brain, and that no one has (yet) found a truly quantum mechanical action in the brain. Still, there is something incredibly seductive—even uplifting, I would say—about the idea that there really is something “magic” about us, our experience of life, which is still inexplicable to science. Still defensible, that is, from the brutal, deterministic nihilism of the New Atheists and the hard-core scientific materialists.

At least, I think so. Check it out.